With organic search driving 53.3% of worldwide website traffic, SEO continues to be the backbone of sustainable online acquisition. Search remains the most dominant source of trackable web traffic. However, for massive enterprises, the stakes are even higher. A study from Search Engine Land highlights that when you are managing millions of pages, even a tiny technical error can result in thousands of lost conversions and millions in missed revenue.

This blog post addresses the unique hurdles of large scale SEO. If you feel like you are playing a never-ending game of “Whack-A-Mole” with your site’s indexation or content quality, you are in the right place. We will explore how to transition from manual tweaks to a robust enterprise SEO strategy that works while you sleep. Whether you run an e-commerce giant or a global SaaS platform, this guide gives you the blueprint to dominate the SERPs at scale.

How do you scale SEO for a large website?

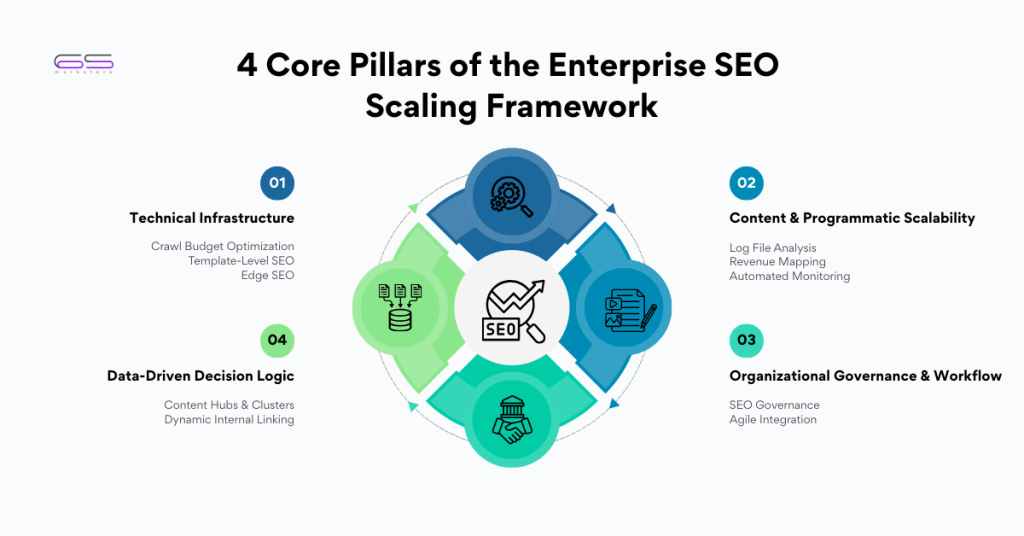

To manage and scale SEO for large websites, you must move away from manual page-by-page optimization and leverage automation. The process rests on four pillars: optimizing your crawl budget, implementing a programmatic SEO framework for content, building a scalable site architecture with automated internal linking, and using AI-driven tools to monitor technical health across thousands of URLs simultaneously.

Key Takeaways for Large Scale SEO Success

- Prioritize Crawl Efficiency: Focus on crawl budget optimization to ensure Google finds your most important pages first.

- Think Programmatically: Use data-driven templates to create thousands of high-quality pages through programmatic SEO.

- Automation: Leverage SEO tools and scripts to monitor site health, broken links, and metadata at scale.

- Architecture: Build a flat, logical site structure to distribute link equity effectively.

Why Scaling SEO Is Unique for Large Websites

When you manage a small blog, you can hand-craft every meta description and check every link. However, when you tackle SEO for large websites, manual labor becomes your biggest enemy.

Scaling is not about doing “more” SEO; it is about doing SEO “differently.” On a site with 50,000 or 500,000 pages, you cannot afford to look at individual URLs. Instead, you must look at patterns, templates, and systems.

The complexity grows exponentially as your site expands. You aren’t just worried about keywords; you are worried about server logs, faceted navigation, and duplicate content caused by URL parameters. In this environment, an enterprise SEO strategy focuses on the “macro” rather than the “micro.”

You need to be able to make a single change to a global header or a database schema and influence thousands of pages. If you don’t shift your mindset toward systems thinking, your organic growth will eventually suffer under the weight of your own site’s complexity.

Common SEO Challenges for Large Websites

The biggest enemy of large scale SEO is “bloat.” As websites grow, they often accumulate “zombie pages”: low-value content that doesn’t rank but still consumes search engine crawl resources. When Google’s bots spend time crawling your outdated 2018 promotional pages instead of your new product launches, your revenue is in trouble. Therefore, crawl budget optimization plays a major role in any large-scale operation.

Another massive concern is consistency. How do you make sure that 10,000 product descriptions have the same brand voice and SEO standards? Without a scalable process, you end up with “keyword cannibalization”: multiple pages competing for the same keyword, confusing Google and diluting your authority.

Furthermore, technical debt, like slow load times across specific categories, can hide in the corners of a large site, dragging down your overall domain ranking.
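The keyword cannibalization check mentioned above can be sketched in a few lines of Python. This is a minimal illustration, assuming you already keep a mapping of each URL to its intended target keyword; the function name `find_cannibalization` and the sample URLs are hypothetical.

```python
from collections import defaultdict


def find_cannibalization(keyword_map):
    """Return {keyword: [urls]} for every keyword targeted by more than one URL.

    keyword_map is an iterable of (url, target_keyword) pairs, for example
    an export from a hypothetical master keyword database.
    """
    by_keyword = defaultdict(set)
    for url, keyword in keyword_map:
        # Normalise case and whitespace so "Running  Shoes" matches "running shoes".
        normalised = " ".join(keyword.lower().split())
        by_keyword[normalised].add(url)
    return {kw: sorted(urls) for kw, urls in by_keyword.items() if len(urls) > 1}


if __name__ == "__main__":
    sample = [
        ("/products/shoes/running", "running shoes"),
        ("/blog/best-running-shoes", "Running  Shoes"),
        ("/products/hats/caps", "baseball caps"),
    ]
    print(find_cannibalization(sample))
```

Exact-match grouping like this only catches the obvious collisions; near-duplicate intents would need a fuzzier comparison.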

SEO Challenges vs. Scalable Solutions

| SEO Challenge | Scalable Solution |
| --- | --- |
| Crawl Inefficiency | Implementing crawl budget optimization via Robots.txt and Nofollow tags. |
| Duplicate Content | Automated Canonical tag injection and parameter handling in GSC. |
| Keyword Cannibalization | Mapping URL patterns to specific intent clusters in a master database. |
| Low-Quality Content | Using programmatic SEO templates with dynamic data pulls. |
| Manual Internal Linking | Creating “Related Links” widgets based on taxonomy and category data. |
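As one illustration of the "automated canonical tag" row, here is a rough Python sketch that strips parameters which do not change page content and emits a canonical link element. The parameter list in `IGNORED_PARAMS` is an assumption for the example; which parameters are safe to drop depends entirely on your own site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change page content on this
# hypothetical site; adjust it to your own URL scheme.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sort", "sessionid"}


def canonical_url(url):
    """Drop ignored parameters and the fragment, and sort what remains."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def canonical_tag(url):
    """Build the <link rel="canonical"> element for a page template."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


if __name__ == "__main__":
    print(canonical_tag("https://Example.com/products/shoes?utm_source=x&color=red#top"))
```

Because the rule lives in one function, a template can call it for every URL variant instead of someone setting canonicals by hand.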

Build a Scalable SEO Architecture

A solid foundation is the only way to support a massive structure. When designing an enterprise SEO strategy, your site architecture must be “flat” yet hierarchical. To be more precise, no important page should be more than three or four clicks away from the homepage. How do you do that for a site with 100,000 pages? You must use a logical category-and-subcategory system that Google can easily navigate.

Next, you need to consider your “URL taxonomy.” Your URLs should be descriptive and follow a strict pattern. This is important because it allows you to set up rules in your SEO tools to monitor specific sections of the site. If all your product pages follow the /products/[category]/[item-name] format, it is easy to pull a report and see whether an entire category is underperforming. A scalable architecture enables both users and bots to find what they need without getting lost in the site’s complexity.
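To show why a strict URL pattern pays off, here is a small Python sketch that rolls page-level clicks up to the category level. It assumes the /products/[category]/[item-name] pattern from the paragraph above and a hypothetical list of (url, clicks) rows, such as an export from your analytics tool.

```python
from collections import Counter
from urllib.parse import urlparse


def section_of(url):
    """Map a URL to its reporting section based on the path pattern."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    if len(parts) >= 2 and parts[0] == "products":
        # Product pages are grouped by category: /products/<category>
        return f"/products/{parts[1]}"
    # Everything else is grouped by its first path segment.
    return f"/{parts[0]}" if parts else "/"


def clicks_by_section(rows):
    """Sum clicks per section from (url, clicks) rows."""
    totals = Counter()
    for url, clicks in rows:
        totals[section_of(url)] += clicks
    return dict(totals)


if __name__ == "__main__":
    rows = [
        ("https://example.com/products/shoes/red-sneaker", 10),
        ("https://example.com/products/shoes/blue-boot", 5),
        ("https://example.com/blog/post", 3),
    ]
    print(clicks_by_section(rows))
```

With inconsistent URLs, this kind of grouping would need a lookup table per page; with a strict taxonomy, it is a few lines of path parsing.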

Technical SEO for Thousands of Pages

Technical SEO is where most large sites win or lose. When you have a massive footprint, crawl budget optimization becomes your top priority. Google does not crawl every corner of the internet. If your site is messy or not useful for the user, the bots will leave before they find your best content. You must use your robots.txt file to keep bots away from “junk” areas like search result pages, filtered views, or admin folders.
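Before shipping robots.txt changes, it helps to test them against a list of known URLs. The sketch below uses Python's standard `urllib.robotparser`; the rules and URLs are made up for illustration. Note that the standard-library parser does simple prefix matching and may not mirror Google's matching behaviour exactly, so treat it as a sanity check rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules that keep bots out of search results and admin folders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /admin/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given rules would block for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    urls = [
        "https://example.com/search?q=shoes",
        "https://example.com/products/shoes/red-sneaker",
        "https://example.com/admin/login",
    ]
    for url in blocked_urls(ROBOTS_TXT, urls):
        print("blocked:", url)
```

Running this against a sample of your most valuable URLs is a cheap way to confirm a new rule does not block pages you want crawled.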

You should leverage XML sitemaps strategically. Don’t just dump 50,000 links into one file. Instead, break your sitemaps down by category or content type. This gives you granular data in Google Search Console, letting you see exactly which sections of your site are being indexed and which are being ignored. According to Search Engine Land, monitoring the “Index Coverage” report is vital because it reveals the “hidden” technical errors that only appear at scale, such as redirect loops or 404 spikes during a server migration.
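Splitting sitemaps by section can be automated. The following Python sketch groups URLs by their first path segment and writes one or more sitemap files per group, respecting the sitemap protocol's limit of 50,000 URLs per file. The file naming scheme is an assumption for the example.

```python
from collections import defaultdict
from urllib.parse import urlparse
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file limit in the sitemap protocol


def group_by_section(urls):
    """Group URLs by their first path segment (e.g. 'products', 'blog')."""
    groups = defaultdict(list)
    for url in urls:
        parts = [p for p in urlparse(url).path.split("/") if p]
        groups[parts[0] if parts else "root"].append(url)
    return groups


def build_sitemaps(urls, max_urls=MAX_URLS):
    """Return {filename: xml_string}, with one or more files per section."""
    files = {}
    for section, section_urls in sorted(group_by_section(urls).items()):
        for start in range(0, len(section_urls), max_urls):
            urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
            for url in section_urls[start:start + max_urls]:
                ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
            # Hypothetical naming scheme: sitemap-<section>-<part>.xml
            name = f"sitemap-{section}-{start // max_urls + 1}.xml"
            files[name] = ET.tostring(urlset, encoding="unicode")
    return files


if __name__ == "__main__":
    demo = ["https://example.com/products/a", "https://example.com/blog/x"]
    for name, xml in build_sitemaps(demo).items():
        print(name, xml)
```

Each generated file can then be listed in a sitemap index and submitted separately, which is what produces the per-section indexing data described above.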

Managing SEO for large websites takes specialized effort that most generic SEO agencies simply can’t provide. Connect with us today to see how we can streamline your organic growth.

Content & Programmatic SEO: Scaling Quality

You might think that creating content for 10,000 pages requires 10,000 writers, but that is a recipe for bankruptcy. This is where programmatic SEO changes the game. Programmatic SEO is the practice of using data and templates to create thousands of unique, high-quality pages. For example, a travel site might use a template to create pages for “Best Hotels in [City],” where the [City] variable pulls from a database.

The key to successful programmatic SEO is making sure that each page provides unique value. You cannot just swap out one keyword; you need to collect unique data points, such as local weather, pricing, user reviews, or maps. This ensures that Google views these pages as helpful resources rather than “thin” content. When done correctly, this allows you to capture “long-tail” search traffic across thousands of variations, providing a massive boost to your overall large scale SEO efforts.
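Here is a minimal Python sketch of the “Best Hotels in [City]” idea. The `CityData` fields, the template wording, and the `min_hotels` threshold are all assumptions made for illustration; the point is that a page is only generated when the row carries enough unique data to avoid thin content.

```python
from dataclasses import dataclass


@dataclass
class CityData:
    """One database row for a city page (hypothetical schema)."""
    city: str
    hotel_count: int
    avg_price_usd: float
    top_review: str


TITLE = "Best Hotels in {city}"
BODY = (
    "Compare {hotel_count} hotels in {city}, averaging ${avg_price_usd:.0f} "
    'per night. A recent guest wrote: "{top_review}"'
)


def render_page(row, min_hotels=3):
    """Return (title, body), or None when the row is too thin to publish."""
    if row.hotel_count < min_hotels or not row.top_review.strip():
        return None
    fields = vars(row)
    return TITLE.format(**fields), BODY.format(**fields)


if __name__ == "__main__":
    rows = [
        CityData("Lisbon", 42, 118.4, "Great rooftop view."),
        CityData("Tinytown", 1, 60.0, ""),
    ]
    for row in rows:
        print(row.city, "->", render_page(row))
```

Skipping rows that fail the data check is a deliberate design choice here: publishing fewer, richer pages tends to be safer than filling every template slot.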

Internal Linking for Large Websites

Internal links are the “pipes” that distribute “link juice” (authority) throughout your site. On a small site, you can link pages manually. On a large site, you need an automated internal linking system. This means creating “dynamic modules” that link to related products, similar articles, or “people also viewed” sections. With these modules, every new page you publish automatically receives links from powerful pages that already exist.

You should also consider using “breadcrumbs”. Breadcrumbs not only help your visitors navigate your site but also help Google’s bots follow your internal link structure. They reinforce the organization of your site and form a network of links that connect back to your category pages. This strengthens the authority of your “money pages” while ensuring that even the deepest sub-pages are discoverable.
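A “related links” module can be as simple as the Python sketch below. It assumes each page record carries a `category` and a `views` count (both hypothetical field names) and links every page to the most-viewed siblings in its category.

```python
from collections import defaultdict


def related_links(pages, max_links=3):
    """Return {url: [related urls]} using the most-viewed pages per category.

    pages is a list of dicts with 'url', 'category', and 'views' keys.
    """
    by_category = defaultdict(list)
    for page in pages:
        by_category[page["category"]].append(page)

    links = {}
    for siblings in by_category.values():
        ranked = sorted(siblings, key=lambda p: p["views"], reverse=True)
        for page in siblings:
            # Link to the strongest siblings, never to the page itself.
            candidates = [p["url"] for p in ranked if p["url"] != page["url"]]
            links[page["url"]] = candidates[:max_links]
    return links


if __name__ == "__main__":
    pages = [
        {"url": "/a", "category": "shoes", "views": 50},
        {"url": "/b", "category": "shoes", "views": 90},
        {"url": "/c", "category": "shoes", "views": 10},
    ]
    print(related_links(pages, max_links=2))
```

Ranking by views is only one possible signal; you could swap in revenue, freshness, or similarity scores without changing the structure.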
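When URLs follow a clean hierarchy, a breadcrumb trail can be derived straight from the path. The Python sketch below is one simple way to do it, assuming that slugs map to readable labels by replacing hyphens with spaces; real sites often pull labels from the category database instead.

```python
from urllib.parse import urlparse


def breadcrumbs(url):
    """Return a list of (label, link) pairs from the homepage down to the page."""
    parsed = urlparse(url)
    base = f"{parsed.scheme}://{parsed.netloc}"
    trail = [("Home", base + "/")]
    path = ""
    for segment in (s for s in parsed.path.split("/") if s):
        path += "/" + segment
        # Assumed labelling rule: "running-shoes" becomes "Running Shoes".
        trail.append((segment.replace("-", " ").title(), base + path))
    return trail


if __name__ == "__main__":
    for label, link in breadcrumbs("https://example.com/products/running-shoes/red-sneaker"):
        print(label, "->", link)
```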

Automation, Tools & Workflows for Big Data

To effectively scale SEO for large websites, you must start leveraging SEO automation. Use tools like Screaming Frog (for crawling), Ahrefs or Semrush (for monitoring at scale), and BigQuery (for data analysis).

For many enterprise sites, standard SEO tools aren’t enough. You may need to write custom Python scripts to analyze your server logs or to automate the generation of meta tags based on page content.
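As an example of the kind of custom script meant here, the Python sketch below counts Googlebot requests per site section and status code. It assumes your server writes the common “combined” access log format, and it identifies Googlebot by user-agent string only, which can be spoofed; verifying the requesting IP is left out of this sketch.

```python
import re
from collections import Counter

# Matches the request, status, and user agent in a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(lines):
    """Count Googlebot requests per (section, status) pair."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match.group("agent"):
            continue
        # Section = first path segment, with any query string removed.
        first = match.group("path").lstrip("/").split("/", 1)[0].split("?", 1)[0]
        hits[("/" + first, match.group("status"))] += 1
    return hits


if __name__ == "__main__":
    sample = [
        '66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET /search?q=red HTTP/1.1" '
        '200 900 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; '
        '+http://www.google.com/bot.html)"',
    ]
    for key, count in googlebot_hits(sample).items():
        print(key, count)
```

A report like this quickly shows whether crawl activity is concentrated on the sections you care about or wasted on search results and filtered views.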

Workflows are equally important. You need a bridge between your SEO team and your engineering team. SEO requirements should be baked into your development sprints. If a developer launches a new feature that accidentally adds noindex tags to 5,000 pages, you need an automated monitoring system to alert you instantly.
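The noindex scenario above is a good candidate for an automated check. The Python sketch below inspects already-fetched HTML for a robots meta tag containing noindex and reports any page that is supposed to be indexable. Fetching the pages, checking the X-Robots-Tag HTTP header, and sending the actual alert are all outside this sketch, and the function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Sets self.noindex when a robots/googlebot meta tag contains noindex."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attr = {key: (value or "") for key, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() in ("robots", "googlebot"):
            if "noindex" in attr.get("content", "").lower():
                self.noindex = True


def has_noindex(html):
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.noindex


def unexpected_noindex(pages, should_be_indexed):
    """pages maps url -> html. Return URLs that must be indexable but are not."""
    return sorted(
        url for url, html in pages.items()
        if url in should_be_indexed and has_noindex(html)
    )


if __name__ == "__main__":
    pages = {"/products/a": '<meta name="robots" content="noindex, follow">'}
    print(unexpected_noindex(pages, {"/products/a"}))
```

Run on a sample of key templates after each deployment, a check like this turns a silent indexing disaster into an immediate, actionable alert.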

Automation ensures that your enterprise SEO strategy stays on track even when the human team is busy with high-level planning.

Conclusion

Scaling your organic presence requires a blend of technical precision, creative content strategies, and robust automation. As we have discussed, SEO for large websites is about building systems that scale and ensuring that every page on your site serves a purpose. From crawl budget optimization to the power of programmatic SEO, the tools are at your fingertips to dominate your niche.

At 6S Marketers, we understand the nuances of large scale SEO. We have helped enterprise-level clients navigate the complexities of massive site architectures and technical hurdles.

If you are ready to take your website to the next level and stop leaving money on the table, our team of experts is ready to help. Contact 6S Marketers today for a tailored SEO audit and let’s start building your path to the top of the SERPs.

FAQs

1. How can you optimize a website that has millions of pages?

Optimizing millions of pages requires a “template-first” approach. You don’t fix individual pages manually; you optimize the underlying code and data structures that generate them. Focus heavily on crawl budget optimization to ensure Google doesn’t get lost, and use programmatic SEO to maintain content quality across the entire domain.

2. What is the biggest SEO challenge for large websites?

The biggest challenge is usually indexation and crawl efficiency. When a site becomes too large, Google may stop crawling new or updated pages because of technical clutter, slow server responses, or a confusing site structure.

3. Is programmatic SEO safe for large sites?

Yes, programmatic SEO is safe and highly effective as long as the pages provide genuine value.

4. How often should large websites run SEO audits?

Large websites should perform “mini-audits” weekly using automated tools to catch broken links or server errors.
